TL;DR (Too Long; Didn't Read)

Skip the guide and do this:

Connect Bright Data to Claude via MCP today. It takes about 15 minutes to set up. Once it is active, Claude can pull live social data directly during your conversation.

Scrape your own profile first. Before looking at competitors, pull your own data. Seeing your engagement rates, top posts, and caption patterns through this lens is often the most clarifying first step.

Let Instagram's Related Accounts data find your competitors for you. Tell Claude to pull the Related Accounts list from your profile. Instagram's algorithm tells you exactly who shares your audience — you do not have to guess.

Ask Claude one specific question, not a general analysis. "What content patterns are working for this creator that I am not using?" gets better answers than "analyze this profile."

Start with the lowest-effort, highest-certainty idea first. If you already have a content series that is working, replicate the format for a new topic before trying anything new. The data shows you what is working. Start there.

The total cost of our test run was $0.01. The output was an 8-page analysis with 10 evidence-backed ideas and a four-week implementation plan. The only question is whether you run it.

Three Levels of Content Scraping

Before getting into the workflow, it helps to understand what your options actually are. There are three practical ways to scrape social media for content research today.

| | Level 1: Bright Data* | Level 2: Apify | Level 3: Bright Data + Claude MCP |
|---|---|---|---|
| What it does | Scrapes structured data from social platforms | Runs pre-built scrapers for quick data pulls | Scrapes data AND analyzes it with AI in one flow |
| Output | Clean JSON/CSV data | Clean JSON/CSV data | Strategic insights, comparisons, content ideas |
| Analysis included | No, you do it yourself | No, you do it yourself | Yes, AI-powered |
| Skill needed | Low-medium | Low | Low (once set up) |
| Best for | One-off data pulls | Quick beginner jobs | Full competitive intelligence |

The core difference is not the scraping. All three tools can pull social data. The difference is what happens after.

Raw data is noise. A spreadsheet of 500 Instagram posts with like counts tells you almost nothing actionable. What you actually need is something that can read all that data, compare it to your own content, identify what you are missing, and generate specific ideas adapted to your brand.

Level 1 and 2 give you data. Level 3 gives you intelligence.

*Bright Data is a web scraping platform that pulls structured data from sites like Instagram, YouTube, and TikTok — so you can analyze what’s actually working.

What Level 3 Actually Looks Like

When you connect Bright Data to Claude through MCP (Model Context Protocol), the workflow changes completely.

Bright Data collects the data. It connects to social platforms and pulls structured information — profiles, posts, metrics, captions, related accounts, timestamps — everything. Claude receives that data directly through MCP. No exporting. No copy-pasting. No file downloads. Then Claude analyzes everything: it reads every caption, compares engagement rates, identifies content patterns, spots gaps, and generates specific recommendations.

You ask a question. You get a finished analysis, not a spreadsheet.

The short version:

Bright Data gets the data

Claude thinks about it

The output is a content strategy, not a CSV

The Real Test Run (What We Actually Did)

Everything below happened in a single Claude conversation. No external tools. No manual data work. No coding.

The goal: Analyze the social presence of @digitalsamaritan across Instagram, YouTube, and TikTok. Auto-identify competitors in the same niche. Compare content strategies. Generate 10 evidence-backed content ideas.

Here’s how we did it:

What Bright Data scraped:

| Profile | Platform | Result |
|---|---|---|
| @digitalsamaritan | Instagram | ✅ Success |
| @digitalsamaritan | YouTube | ✅ Success |
| @nick_saraev (competitor) | Instagram | ✅ Success |
| @theaisurfer (competitor) | Instagram | ✅ Success |
| @digitalsamaritan | TikTok | ❌ Failed (account inactive) |

The MCP event log recorded 30 total API events across the session — 5 trigger calls and 25 snapshot polling requests. Four out of five completed successfully. All data returned as structured JSON directly into the Claude conversation.

The competitors were not manually chosen. Claude used Instagram's Related Accounts data returned by Bright Data to identify which creators share the same audience — then scraped those profiles automatically.

Total Bright Data cost for the entire run: $0.01

What the Data Actually Found

This is where it gets interesting. Here is a summary of the real findings from the analysis:

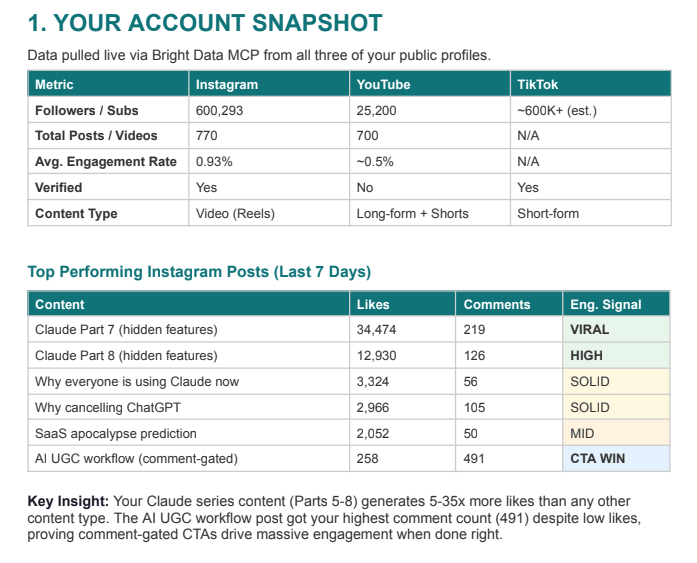

The account snapshot (@digitalsamaritan):

| Metric | Instagram | YouTube |
|---|---|---|
| Followers / Subs | 600,293 | 25,200 |
| Total Posts / Videos | 770 | 700 |
| Avg. Engagement Rate | 0.93% | ~0.5% |
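
To make those percentages concrete, here is a rough conversion from engagement rate to interactions per post. It assumes the rate means average interactions per post divided by audience size, which is a common definition but is not confirmed by the scrape output. The 600,293 figure is the Instagram follower count (it matches the IG Followers row in the competitor benchmark), and `implied_interactions` is an illustrative helper name.

```python
def implied_interactions(audience: int, rate_pct: float) -> float:
    """Average interactions per post implied by an engagement rate.

    Assumes rate = interactions per post / audience size.
    """
    return audience * rate_pct / 100


# (audience size, average engagement rate in percent) from the snapshot table
snapshot = {
    "Instagram": (600_293, 0.93),
    "YouTube": (25_200, 0.5),
}

for platform, (audience, rate_pct) in snapshot.items():
    per_post = implied_interactions(audience, rate_pct)
    print(f"{platform}: ~{per_post:,.0f} interactions per post")
# Instagram: ~5,583 interactions per post
# YouTube: ~126 interactions per post
```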

Top performing posts in the last 7 days:

| Content | Likes | Comments | Signal |
|---|---|---|---|
| Claude Part 7 (hidden features) | 34,474 | 219 | VIRAL |
| Claude Part 8 (hidden features) | 12,930 | 126 | HIGH |
| Why everyone is using Claude now | 3,324 | 56 | SOLID |
| AI UGC workflow (comment-gated) | 258 | 491 | CTA WIN |

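The AI UGC post got 491 comments on just 258 likes: nearly two comments for every like, while each of the other three posts drew fewer than two comments per hundred likes. That single data point shows how strongly a comment-gated CTA shifts the engagement signal a post sends to the algorithm.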
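
The gap is easiest to see as a comments-per-like ratio. The snippet below uses the four posts from the table above; the ratio itself is an illustrative metric chosen for this example, not one reported by Bright Data or Claude.

```python
# (title, likes, comments) for the four top posts in the table
posts = [
    ("Claude Part 7 (hidden features)", 34_474, 219),
    ("Claude Part 8 (hidden features)", 12_930, 126),
    ("Why everyone is using Claude now", 3_324, 56),
    ("AI UGC workflow (comment-gated)", 258, 491),
]


def comments_per_like(likes: int, comments: int) -> float:
    """Comments received for every like on a post."""
    return comments / likes


ratios = {title: comments_per_like(likes, comments) for title, likes, comments in posts}

# Highest ratio first
for title, ratio in sorted(ratios.items(), key=lambda item: item[1], reverse=True):
    print(f"{ratio:6.3f}  {title}")
```

The comment-gated post lands at roughly 1.9 comments per like; the other three all sit below 0.02.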

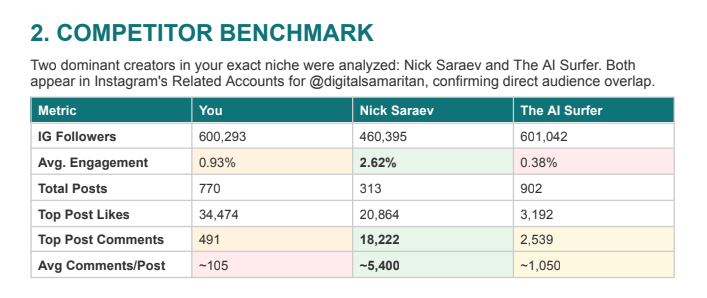

The competitor benchmark:

| Metric | @digitalsamaritan | Nick Saraev | The AI Surfer |
|---|---|---|---|
| IG Followers | 600,293 | 460,395 | 601,042 |
| Avg. Engagement | 0.93% | 2.62% | 0.38% |
| Total Posts | 770 | 313 | 902 |
| Avg Comments/Post | ~105 | ~5,400 | ~1,050 |

The analysis showed that one of my competitors, Nick Saraev, gets about 51 times as many comments per post as I do (~5,400 versus ~105) despite having roughly 140,000 fewer followers. His engagement rate is nearly three times mine (2.62% versus 0.93%).
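
Those multiples follow directly from the benchmark table. A quick check, using only the figures reported above (the dictionary layout and variable names are just for illustration):

```python
benchmark = {
    "@digitalsamaritan": {"followers": 600_293, "engagement_pct": 0.93, "avg_comments": 105},
    "Nick Saraev": {"followers": 460_395, "engagement_pct": 2.62, "avg_comments": 5_400},
    "The AI Surfer": {"followers": 601_042, "engagement_pct": 0.38, "avg_comments": 1_050},
}

me = benchmark["@digitalsamaritan"]
rival = benchmark["Nick Saraev"]

# How many times more comments per post the competitor gets
comment_multiple = rival["avg_comments"] / me["avg_comments"]
# How many fewer followers the competitor has
follower_gap = me["followers"] - rival["followers"]
# Ratio of the two average engagement rates
engagement_multiple = rival["engagement_pct"] / me["engagement_pct"]

print(f"Comments per post: {comment_multiple:.1f}x")
print(f"Follower gap: {follower_gap:,}")
print(f"Engagement rate: {engagement_multiple:.2f}x")
```

The comment averages in the table are approximate, so treat the multiple as a rough figure.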

Claude's analysis identified the reason: it is not content quality. It is content architecture. Every post is engineered around a comment-trigger CTA. That single structural difference creates an engagement flywheel the algorithm rewards at scale.

The Gaps Claude Found (That Were Invisible Without the Data)

Once the scraping was done, I gave Claude one prompt asking for a full competitive analysis. Here is everything it came back with — structured, specific, and ready to act on.

An account snapshot across all three platforms

Claude pulled my own metrics first — follower counts, engagement rates, post volume, top-performing content by likes and comments — and organised them into a clean comparison across Instagram, YouTube, and TikTok. Seeing your own data laid out this way is clarifying in a way that manually checking your profile never is. You notice things you had been ignoring.

A competitor benchmark table

Claude identified two direct competitors from the Related Accounts data Instagram returned, scraped both profiles, and built a side-by-side comparison. Not just follower counts — engagement rates, average comments per post, caption length patterns, CTA structure, content themes. The kind of analysis that would normally take a researcher half a day to pull together manually.

The gaps became visible immediately. One competitor was getting significantly more comments per post despite having fewer followers. Claude identified exactly why: a structural difference in how every post was built, not a difference in content quality.

Five specific content gaps

Not general advice. Five gaps tied directly to the data — things I was not doing that the data showed were working for competitors, things I was underusing despite evidence they worked for my own audience, and platform-level gaps that the follower numbers made impossible to ignore.

Each gap came with the data point that revealed it. If Claude flagged something, it cited the number behind it.

Ten content ideas, each backed by evidence

Every idea was mapped to either a competitor pattern that was demonstrably working, one of my own posts that had outperformed everything else, or a gap in the niche that nobody was filling.

No generic suggestions. Each idea had a specific format recommendation, a caption structure, and a CTA built in. The kind of brief you would hand to a video editor or caption writer and they would know exactly what to make.

A prioritised execution plan

Claude did not just generate ten ideas and leave me to figure out the order. It ranked the top three by effort-to-impact ratio — lowest effort, highest certainty first — and mapped out a four-week implementation sequence with specific actions for each week.

Week by week. Which format to launch first, why, and what to measure.
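
The ranking rule described above (lowest effort first, highest certainty as the tie-breaker) can be sketched in a few lines. This is not Claude's actual method, and the ideas and 1-to-5 scores below are hypothetical placeholders, not the ten ideas from the analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    name: str
    effort: int     # hypothetical score: 1 (low effort) to 5 (high effort)
    certainty: int  # hypothetical score: 1 (speculative) to 5 (already proven)


def top_ideas(ideas: list[Idea], n: int = 3) -> list[Idea]:
    """Sort by effort ascending, then certainty descending, and keep the first n."""
    return sorted(ideas, key=lambda idea: (idea.effort, -idea.certainty))[:n]


# Placeholder ideas for illustration only
ideas = [
    Idea("Launch on a new platform", effort=5, certainty=2),
    Idea("Add a comment-trigger CTA to existing formats", effort=2, certainty=4),
    Idea("Extend a series that already works", effort=1, certainty=5),
    Idea("Try a new long-form format", effort=4, certainty=2),
]

for rank, idea in enumerate(top_ideas(ideas), start=1):
    print(rank, idea.name)
```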

The whole output came back as a formatted document. Eight pages. Ready to share with a team or drop straight into a content calendar.

That is what one conversation produced. One prompt for the analysis, after the scraping was done. The data did the work. Claude organised it into something usable.

How to Set This Up Yourself
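
As a starting point, the connection usually comes down to one entry in Claude Desktop's MCP configuration file (`claude_desktop_config.json`, under the `mcpServers` key). The sketch below prints such an entry. It assumes Bright Data's MCP server is the npm package `@brightdata/mcp` and that it reads your token from an `API_TOKEN` environment variable; verify both against Bright Data's current documentation before relying on them. The server label and the helper name are arbitrary.

```python
import json


def bright_data_mcp_entry(api_token: str) -> dict:
    """Build an mcpServers entry for Claude Desktop.

    Assumptions to verify: package name '@brightdata/mcp' and the
    'API_TOKEN' environment variable.
    """
    return {
        "mcpServers": {
            "Bright Data": {
                "command": "npx",
                "args": ["@brightdata/mcp"],
                "env": {"API_TOKEN": api_token},
            }
        }
    }


# Placeholder token; paste the printed JSON into claude_desktop_config.json
config = bright_data_mcp_entry("<your-bright-data-api-token>")
print(json.dumps(config, indent=2))
```

After saving the file, restart Claude Desktop so it picks up the new server.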
